How We Used AI to Hire Our First Developer

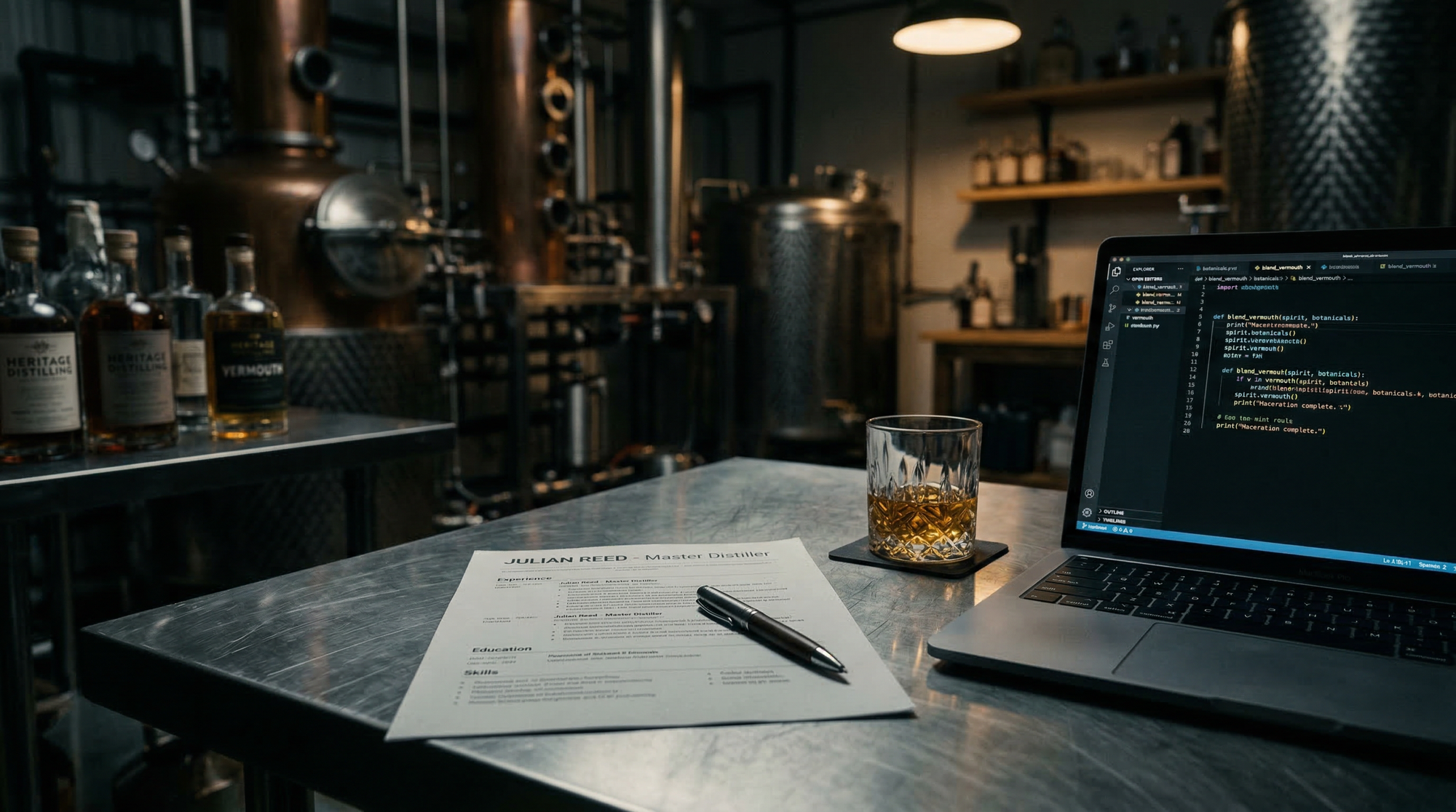

We hired our first developer last week. Our first-ever technical employee at Absolution Labs, in fact. And the whole process, from writing the role spec to evaluating the final candidates, leaned on AI more than anything we've done before.

This isn't a post about recruitment software or applicant tracking systems. It's about what happens when a non-technical founder needs to hire technical talent and uses AI to bridge the gap. The actual decisions, the things that worked, the bits where human judgment still mattered more than any model's output.

The bottleneck audit

Before we even thought about writing a job ad, we had to figure out what was actually broken.

Here's the reality of running a six-person drinks company. Everyone wears too many hats. One person is trying to be a full-time marketer, a full-time ecommerce manager, a full-time salesperson, a full-time administrator and a full-time blender and paster. You simply can't do all of those things well. Something always gives, and usually it's the work that would have the biggest long-term impact on the business.

So we ran what I've started calling a bottleneck audit. We listed every regular task across the team that has a positive impact on the business, then flagged the ones where a human bottleneck was limiting how often or how well those tasks got done. Not a spreadsheet exercise for its own sake. A genuine attempt to see where the constraint was sitting.
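For anyone who wants to try this in something more structured than a notebook, here's a minimal sketch of the audit as a small Python script. It's an illustration rather than what we actually ran: the `Task` fields, the 1 to 5 impact score and the example tasks are all assumptions made up for the example.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    owner: str
    impact: int         # hypothetical 1-5 score for positive business impact
    bottlenecked: bool  # True if a person's time limits how often or how well it gets done


def find_bottlenecks(tasks: list[Task]) -> list[Task]:
    """Return only the bottlenecked tasks, highest impact first."""
    flagged = [t for t in tasks if t.bottlenecked]
    return sorted(flagged, key=lambda t: t.impact, reverse=True)


# Made-up example tasks, not our real audit.
tasks = [
    Task("Weekly email newsletter", "marketing", 4, True),
    Task("Order fulfilment reports", "ecommerce", 3, True),
    Task("Bottling run", "production", 5, False),
    Task("Wholesale follow-ups", "sales", 5, True),
]

for task in find_bottlenecks(tasks):
    print(f"{task.impact}  {task.name} ({task.owner})")
```

The point of sorting by impact is that the top of the list is where removing the constraint would matter most, which is the same question the audit is asking.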

What emerged wasn't a developer job description. It was a map of friction points, the places where manual work was eating time that could be spent on production, on customers, on the things we actually got into this business to do. The role we needed sat at the intersection of those SME business challenges and the technical oversight that AI and automation can bring. That intersection became the job spec.

Writing the spec with AI

I fed the bottleneck audit into Claude. Not the polished version, the messy notes. Task lists, frustrations, half-formed ideas about what "better" would look like. Then I asked it to help draft a role specification based on the outcomes we wanted rather than a list of technologies.

This is where AI was genuinely useful. I'm not a technical founder. I know what problems need solving but I don't always know the right language to describe the technical skills that would solve them. The AI helped translate business pain into technical requirements in a way that would make sense to candidates who think in systems and architecture.

It also suggested things I wouldn't have thought to include. Risk mitigation competencies. Compliance awareness around PII and GDPR. Security considerations for the automations we're building. Not because I don't care about those things, but because I wouldn't have known to frame them as explicit requirements in a job ad.

Fair caveat: the first draft it produced was corporate nonsense. Generic tech company language that would've attracted exactly the wrong kind of person. We rewrote it heavily to sound like us, because the spec needs to filter for culture fit as much as technical ability. You're joining a team of six in a South London workshop, not a Series B startup.

The layered interview

This is where it got interesting. We ran a two-stage process, and the split between human and AI judgment was deliberate.

Round one was purely human. No AI involvement at all. We wanted to understand communication style, personality, how someone translates between old-school manufacturing processes and modern technical methods. Integrating into a team of six is never easy, and no amount of technical skill compensates for a bad fit. That read has to come from people, not models.

Round two brought AI into the assessment. We used it to generate a technical project brief, a real-world task based on the kinds of systems we're actually building at Absolution Labs. Candidates took the brief away, worked on it, came back with their approach. Then AI helped evaluate the responses.

Specifically, it rated technical performance, problem-solving methodology and the strength of architectural decisions. It ranked candidates against each other and, critically, flagged the absence of things we might not have noticed. Did the candidate think about data privacy? Did they consider what happens when the system fails? Did they address compliance?

A University of Washington study found that humans tend to mirror AI hiring recommendations around 90% of the time, even when those recommendations contain bias. We were conscious of that risk, which is partly why the human round came first and why we never let the AI make the final call.
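To make the "flag what's missing" idea concrete, here's a deliberately crude sketch in Python. It is not how the model evaluated anything. It's a keyword checklist standing in for that step, and the `CHECKLIST` topics, the keywords and the sample response are all assumptions invented for illustration.

```python
# Hypothetical checklist: topic -> keywords that suggest the topic was addressed.
CHECKLIST = {
    "data privacy": ["privacy", "pii", "personal data"],
    "failure handling": ["fail", "retry", "fallback"],
    "compliance": ["gdpr", "compliance"],
}


def missing_considerations(response: str) -> list[str]:
    """Return the checklist topics that the response never mentions."""
    text = response.lower()
    return [
        topic
        for topic, keywords in CHECKLIST.items()
        if not any(keyword in text for keyword in keywords)
    ]


# Made-up candidate response.
response = "We store customer emails under GDPR and mask PII in logs."
print(missing_considerations(response))  # ['failure handling']
```

A keyword match is far blunter than a model reading the whole response, but the shape is the same: decide up front which considerations matter, then look for gaps rather than only scoring what's there.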

Seeing through the AI layer

Here's the part I found most fascinating, and I think it says something broader about where hiring is heading.

Most of our candidates used AI tools to help with their responses. We knew they would, and we encouraged it. The ability to work effectively with AI is literally part of the job description, so penalising someone for using it in the interview would've been absurd.

But beneath the surface of those AI-assisted responses, we wanted to understand the architecture. How well could each candidate add their own judgment and experience on top of what the model gave them? Could they explain why they chose one approach over another, or were they just presenting the first thing ChatGPT suggested with a bit of formatting?

That distinction, the thinking on top of the tool, turned out to be the clearest signal of quality. Several candidates had similar surface-level technical responses (because they were often using the same AI tools). The separation came from who could articulate the reasoning, who spotted the risks the AI missed, and who had opinions that came from actual experience rather than a well-prompted output.

What this means for small producers

I'm writing this up because I think the framework applies well beyond our specific situation.

If you're running a small business and you've never made a technical hire, the knowledge gap can feel paralysing. You don't know what good looks like, and you know you don't know. Traditionally that means either hiring a recruiter (expensive) or leaning on someone in your network who does know (if you're lucky enough to have that person).

AI gives you a third option. Not a replacement for human judgment, but a bridge across the knowledge gap. It can help you translate business problems into technical requirements, generate assessment materials you couldn't write yourself, and evaluate responses against criteria you understand but couldn't articulate on your own.

The best guidance on hiring developers in 2026 still says that if you're non-technical, you need someone you trust to evaluate candidates. That's true. But "someone" can now include AI as one voice in a panel rather than the sole authority. It's another layer of input, weighted alongside your own instincts about who this person is and whether they'll thrive in your environment.

The important bit is the layering. Human first for the things humans are good at (reading people, sensing fit, understanding context). AI for the things it's good at (systematic evaluation, red flag detection, technical benchmarking). Neither one on its own would have given us the confidence we needed. Together they worked.

The hire

We made the offer last week. Won't say more than that because the person hasn't started yet and deserves their privacy. But the process felt right in a way that previous hiring rounds haven't. More structured, more thorough, and more honest about the limits of our own expertise.

The bottleneck audit has already proved useful beyond hiring, by the way. We've started using the same framework to decide where to point AI tools generally. List the tasks, find the constraint, ask whether a human bottleneck can be removed. It's a surprisingly good filter for separating genuine AI use cases from the stuff that just sounds impressive in a pitch deck.

The workshop's still the same. Six people, tight margins, botanicals everywhere. But now there'll be seven, and the way we found number seven was genuinely new.

Frequently asked questions

Can a non-technical founder use AI to hire a developer?

Yes, but as a layer of support rather than a replacement for human judgment. AI can translate business pain into technical requirements, generate assessment briefs, and flag gaps in candidate responses. The final call should still come from people who understand the team and culture fit.

What is a bottleneck audit for small businesses?

A bottleneck audit maps every regular task across your team and identifies where human constraints are limiting output. It reveals which roles or tools would have the biggest impact, helping you hire or automate based on evidence rather than assumptions.

How should small companies assess developer candidates using AI?

Use a layered approach: human-first interviews for culture fit and communication, then AI-assisted technical evaluation. AI is good at systematic scoring, spotting missing considerations (security, compliance, failure modes), and benchmarking candidates against each other. The key signal is whether candidates can think on top of AI tools, not just present AI-generated answers.

Is it ethical to use AI in hiring decisions?

It can be, with deliberate safeguards. Research from the University of Washington found humans mirror AI hiring recommendations roughly 90% of the time, even when those recommendations are biased. Running the human assessment first, before AI input, helps preserve independent judgment and reduces the risk of algorithmic bias shaping the outcome.

Robert Berry is co-founder of Asterley Bros, a London-based premium aperitivo company, and Absolution Labs, an AI automation consultancy for drinks businesses. He makes vermouth by day and builds AI systems in the margins.